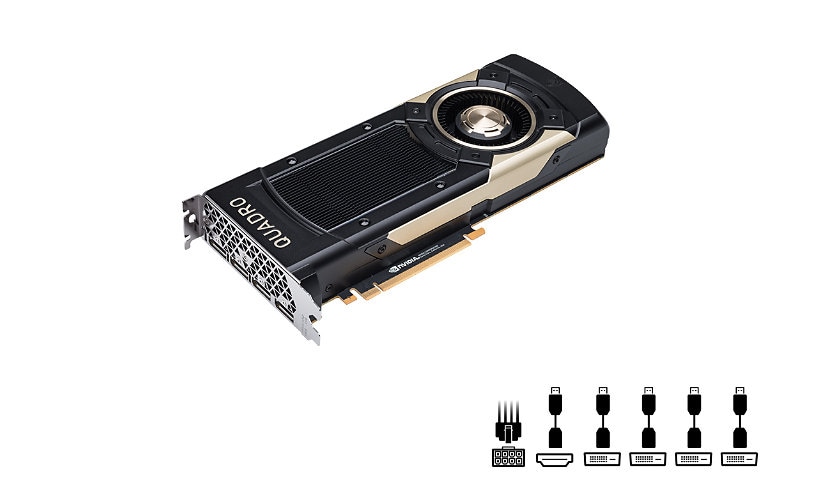

Quick tech specs

- Graphics card

- 32 GB HBM2

- 4 x DisplayPort

- Quadro GV100

- PCIe 3.0 x16

- Adapters Included

Know your gear

AI, photorealistic rendering, simulation, and VR are transforming professional workflows. Engineers can now create groundbreaking products faster. Architects can design buildings that could only have existed in their imaginations. And artists can render complex photorealistic scenes in seconds instead of hours. As applications continue to be enhanced with these technologies, professional computing tools need to keep pace.

The NVIDIA Quadro GV100 is reinventing the workstation to meet the demands of these next-generation workflows. It's powered by NVIDIA Volta, delivering the extreme memory capacity, scalability, and performance that designers, architects, and scientists need to create, build, and solve the impossible.

Based on a state-of-the-art 12nm FFN high-performance manufacturing process customized for NVIDIA to incorporate 5120 CUDA cores, the Quadro GV100 GPU is the most powerful computing platform for HPC, AI, VR, and graphics workloads on professional desktops. Able to deliver more than 7.4 TFLOPS of
